The theory of graph-cuts is used often in the field of computer vision. Graph-cuts are employed to efficiently solve a wide variety of computer vision problems, such as image smoothing, the stereo correspondence, and many other problems that can be formulated in terms of energy minimization. Hold on, energy minimization? It basically refers to finding the equilibrium state. We will talk more about it soon. Many computer vision algorithms involve transforming a given problem into a graph and cutting that graph in the best way. When we say “graph-cuts”, we are specifically referring to the models which use a max-flow/min-cut optimization. Too much jargon? Let’s just dissect it and see what’s inside, shall we? Continue reading “Graph-Cuts In Computer Vision”

The theory of graph-cuts is used often in the field of computer vision. Graph-cuts are employed to efficiently solve a wide variety of computer vision problems, such as image smoothing, the stereo correspondence, and many other problems that can be formulated in terms of energy minimization. Hold on, energy minimization? It basically refers to finding the equilibrium state. We will talk more about it soon. Many computer vision algorithms involve transforming a given problem into a graph and cutting that graph in the best way. When we say “graph-cuts”, we are specifically referring to the models which use a max-flow/min-cut optimization. Too much jargon? Let’s just dissect it and see what’s inside, shall we? Continue reading “Graph-Cuts In Computer Vision”

Tag: Graphs

What Are Conditional Random Fields?

This is a continuation of my previous blog post. In that post, we discussed about why we need conditional random fields in the first place. We have graphical models in machine learning that are widely used to solve many different problems. But Conditional Random Fields (CRFs) address a critical problem faced by these graphical models. A popular example for graphical models is Hidden Markov Models (HMMs). HMMs have gained a lot of popularity in recent years due to their robustness and accuracy. They are used in computer vision, speech recognition and other time-series related data analysis. CRFs outperform HMMs in many different tasks. How is that? What are these CRFs and how are they formulated? Continue reading “What Are Conditional Random Fields?”

This is a continuation of my previous blog post. In that post, we discussed about why we need conditional random fields in the first place. We have graphical models in machine learning that are widely used to solve many different problems. But Conditional Random Fields (CRFs) address a critical problem faced by these graphical models. A popular example for graphical models is Hidden Markov Models (HMMs). HMMs have gained a lot of popularity in recent years due to their robustness and accuracy. They are used in computer vision, speech recognition and other time-series related data analysis. CRFs outperform HMMs in many different tasks. How is that? What are these CRFs and how are they formulated? Continue reading “What Are Conditional Random Fields?”

Why Do We Need Conditional Random Fields?

This is a two-part discussion. In this blog post, we will discuss the need for conditional random fields. In the next one, we will discuss what exactly they are and how do we use them. The task of assigning labels to a set of observation sequences arises in many fields, including computer vision, bioinformatics, computational linguistics and speech recognition. For example, consider the natural language processing task of labeling the words in a sentence with their corresponding part-of-speech tags. In this task, each word is labeled with a tag indicating its appropriate part of speech, resulting in annotated text. To give another example, consider the task of labeling a video with the mental state of a person based on the observed behavior. You have to analyze the facial expressions of the user and determine if the user is happy, angry, sad etc. We often wish to predict a large number of variables that depend on each other as well as on other observed variables. How to achieve these tasks? What model should we use? Continue reading “Why Do We Need Conditional Random Fields?”

This is a two-part discussion. In this blog post, we will discuss the need for conditional random fields. In the next one, we will discuss what exactly they are and how do we use them. The task of assigning labels to a set of observation sequences arises in many fields, including computer vision, bioinformatics, computational linguistics and speech recognition. For example, consider the natural language processing task of labeling the words in a sentence with their corresponding part-of-speech tags. In this task, each word is labeled with a tag indicating its appropriate part of speech, resulting in annotated text. To give another example, consider the task of labeling a video with the mental state of a person based on the observed behavior. You have to analyze the facial expressions of the user and determine if the user is happy, angry, sad etc. We often wish to predict a large number of variables that depend on each other as well as on other observed variables. How to achieve these tasks? What model should we use? Continue reading “Why Do We Need Conditional Random Fields?”

Investors Are Unimpressed By Facebook’s Graph Search

Facebook unveiled its graph search recently. This announcement was highly anticipated and Facebook is banking on it to generate some revenue. While it is very good from a technological point of view, the unveiling didn’t exactly impress the investors. The stock prices actually went down after the announcement. Facebook really wants to create a source to generate sustainable revenues. What exactly are the investors looking for? Even though graph search looks promising, why aren’t the investors convinced yet? Continue reading “Investors Are Unimpressed By Facebook’s Graph Search”

Facebook unveiled its graph search recently. This announcement was highly anticipated and Facebook is banking on it to generate some revenue. While it is very good from a technological point of view, the unveiling didn’t exactly impress the investors. The stock prices actually went down after the announcement. Facebook really wants to create a source to generate sustainable revenues. What exactly are the investors looking for? Even though graph search looks promising, why aren’t the investors convinced yet? Continue reading “Investors Are Unimpressed By Facebook’s Graph Search”

Dynamic Programming

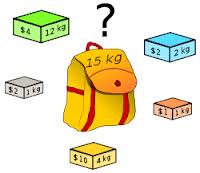

Most of the techies have come across this concept one time or the other. People know that it’s really good and very useful, but not a lot of them know how exactly it works and why it works in the first place! Let’s say you are presented with a big box of precious stones with different sizes and weights. You have a bag with you which can only hold a limited weight. So obviously you can’t take everything. In particular, you’re constrained to take only what your bag can hold. Let’s say it can only hold W pounds. You also know the market value for each of those stones. Given that you can only carry W pounds, what stones should you pick in order to maximize your profit? Continue reading “Dynamic Programming”

Most of the techies have come across this concept one time or the other. People know that it’s really good and very useful, but not a lot of them know how exactly it works and why it works in the first place! Let’s say you are presented with a big box of precious stones with different sizes and weights. You have a bag with you which can only hold a limited weight. So obviously you can’t take everything. In particular, you’re constrained to take only what your bag can hold. Let’s say it can only hold W pounds. You also know the market value for each of those stones. Given that you can only carry W pounds, what stones should you pick in order to maximize your profit? Continue reading “Dynamic Programming”