This is a two-part discussion. In this blog post, we will discuss the need for conditional random fields. In the next one, we will discuss what exactly they are and how we use them.

The task of assigning labels to a set of observation sequences arises in many fields, including computer vision, bioinformatics, computational linguistics and speech recognition. For example, consider the natural language processing task of labeling the words in a sentence with their corresponding part-of-speech tags. Each word is labeled with a tag indicating its part of speech, resulting in annotated text. To give another example, consider the task of labeling a video with the mental state of a person based on observed behavior. You have to analyze the person's facial expressions and determine whether they are happy, angry, sad, etc. In problems like these, we want to predict a large number of variables that depend on each other as well as on other observed variables. How do we achieve these tasks? What model should we use?


Why conditional random fields?

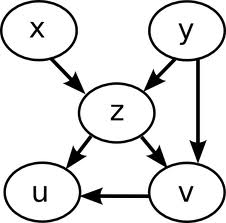

In many applications, we want the ability to predict multiple variables that depend on each other. For example, the performance of a sports team depends on the health of each member of the team. The health of each member might be affected by the team's travel schedule. The results of a game might affect the morale of the team. The morale, in turn, might affect the players' health. As you can see, there are multiple variables that depend on each other intricately. Conditional Random Fields (CRFs) are very useful in modeling these problems. Many applications are similar, such as classifying regions of an image, estimating the score in a strategic game, segmenting genes in a strand of DNA, extracting syntax from natural-language text, etc. In such applications, we wish to predict a vector of output random variables given an observed feature vector. Graphical models provide a natural way to represent how the output variables depend on each other. Graphical models, which include model families such as Bayesian networks, neural networks, factor graphs, Markov random fields, and others, represent a complex distribution over many variables as a product of local factors on smaller subsets of variables.
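To make "a product of local factors" concrete, here is a minimal sketch in Python. The three-variable chain, the factor functions and their numeric values are all made up for illustration; they are not taken from any particular model or library. The normalization is done by brute-force enumeration, which only works because the example is tiny.

```python
from itertools import product

# Hypothetical toy model: three binary variables in a chain, A - B - C.
# Each local factor scores one pair of neighboring variables.
def factor_ab(a, b):
    # Assumed values: neighbors that agree get a higher score.
    return 3.0 if a == b else 1.0

def factor_bc(b, c):
    return 2.0 if b == c else 1.0

def unnormalized(a, b, c):
    # The global score is simply the product of the local factors.
    return factor_ab(a, b) * factor_bc(b, c)

# Enumerate all 2^3 assignments and normalize to get a distribution.
states = list(product([0, 1], repeat=3))
Z = sum(unnormalized(*s) for s in states)  # normalization constant
joint = {s: unnormalized(*s) / Z for s in states}

for s in states:
    print(s, round(joint[s], 4))
```

Each factor only ever looks at two variables, yet together they define a distribution over all three. With many variables the enumeration used for `Z` becomes infeasible, which is part of why the structure of the graph matters.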

Why aren’t graphical models sufficient?

Much work in learning with graphical models, especially in statistical natural-language processing, has focused on generative models that explicitly attempt to model a joint probability distribution over inputs and outputs. A generative model is a model for randomly generating observable data based on given parameters. Although there are advantages to this approach, it also has important limitations. Not only can the dimensionality of the input be very large, but the features have complex dependencies, so constructing a probability distribution over them is difficult. Modeling the dependencies among inputs can lead to intractable models. If that’s the case, why don’t we just ignore the dependencies? Will that make our life easier? Not exactly. Ignoring them will lead to reduced performance, which is definitely something we don’t want. This is where CRFs come in. A CRF models the conditional distribution of the outputs given the inputs directly, so the dependencies among the inputs never have to be modeled. And whereas an ordinary classifier predicts a label for a single sample without regard to neighboring samples, a CRF takes context into account.
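The difference between the two approaches can be written down in two lines. This is a generic sketch, with x standing for the inputs and y for the outputs (the symbols are assumptions introduced here, not notation from the post):

```latex
% Generative: model the joint distribution, which requires a model of the inputs x.
p(\mathbf{x}, \mathbf{y}) = p(\mathbf{y})\, p(\mathbf{x} \mid \mathbf{y})

% Discriminative: only the conditional is needed to predict y from x.
p(\mathbf{y} \mid \mathbf{x}) = \frac{p(\mathbf{x}, \mathbf{y})}{\sum_{\mathbf{y}'} p(\mathbf{x}, \mathbf{y}')}
```

A generative model has to specify the first line, including the hard part, the distribution of x given y. A discriminative model parameterizes the left-hand side of the second line directly and never commits to a distribution over the inputs.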


An Example

Let’s consider this example. You are asked to determine the nationality of a cuisine. You are presented with just a rice-based dish and no additional information. Without context, it’s hard to determine where it comes from because many different cuisines have rice as their main ingredient. Now you are presented with a few more dishes from the same cuisine. Let’s say the additional dishes are Paella, Chorizo, Torrijas, etc. Now you start to see the pattern and realize that the original dish probably came from Spain. This is the intuition behind CRFs. Instead of looking at each item in isolation, a CRF takes the context into account before making a decision.
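The cuisine example can be turned into a small Python sketch. Everything here is hypothetical: the score table, the `SAME_CUISINE_BONUS` value and both function names are invented for illustration, and the scores are hand-picked rather than learned. It is not a CRF implementation, only a brute-force demonstration of how a term that couples neighboring labels can change the answer.

```python
from itertools import product

CUISINES = ["Spanish", "Indian", "Chinese"]

# Hypothetical scores: how well each dish, viewed alone, fits each cuisine.
LOCAL = {
    "rice dish": {"Spanish": 1.0, "Indian": 1.5, "Chinese": 1.2},
    "paella":    {"Spanish": 3.0, "Indian": 0.2, "Chinese": 0.2},
    "torrijas":  {"Spanish": 2.5, "Indian": 0.3, "Chinese": 0.3},
}
SAME_CUISINE_BONUS = 1.0  # assumed reward when adjacent dishes share a label

def label_independently(dishes):
    # Ordinary classifier: pick the best label for each dish on its own.
    return [max(CUISINES, key=lambda c: LOCAL[d][c]) for d in dishes]

def label_jointly(dishes):
    # Context-aware: score a whole labeling at once and pick the best one.
    def score(labels):
        local = sum(LOCAL[d][c] for d, c in zip(dishes, labels))
        pairwise = sum(SAME_CUISINE_BONUS
                       for a, b in zip(labels, labels[1:]) if a == b)
        return local + pairwise
    return list(max(product(CUISINES, repeat=len(dishes)), key=score))

dishes = ["rice dish", "paella", "torrijas"]
print(label_independently(dishes))
print(label_jointly(dishes))
```

Viewed alone, the rice dish gets whichever label has the highest local score. Scored together with its neighbors, the agreement bonus pulls it toward the label the other dishes clearly favor. Enumerating every labeling is only feasible for toy inputs like this one.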

Now that we are clear on why we need CRFs, we will move on to see what exactly these CRFs are and how they are formulated. I will discuss these things in my next blog post.

————————————————————————————————-

Very nice article. I suggest you also cover time complexity, dataset requirements and the role of other factors when evaluating performance. For example, CRFs, being discriminative classifiers, require less data to train than HMMs. However, CRFs are slow to train (refer to slide 8 of this link: nlp.stanford.edu/software/jenny-ner-2007.ppt).

Also, if you would like to elaborate more on the INTRACTABILITY issue, it would give COMPLETENESS to your post 🙂 ! Thanks.

Also, check slide 23 of – http://www.cs.stanford.edu/~nmramesh/crf

This can also be included in your post.