You must have heard the term ‘kernel’ floating around quite a few times. People from many different backgrounds use it in different contexts. The thing is that this term has been applied to different things in different domains. When we talk about operating systems, we talk about which kernel is being used. Kernel is also used extensively in parallel computing and in the GPU domain, where it is the function which is called repetitively on a computing grid. It has a few other meanings in different hardware related programming fields. But in this post, I will discuss kernels as applied to machine learning. Kernels are used in machine learning to transform the data so that the classification becomes easier. One common thing in all these different definitions of the term ‘kernel’ is that it is being used as a bridge between two things. In operating systems, it is the bridge between hardware and software. In GPU domain, it is the bridge between the geometric grid and the programmer. In machine learning, it is the bridge between linearity and non-linearity. I will discuss the underlying mathematical structure in this post. So readers beware, this is a technical deep-dive.

You must have heard the term ‘kernel’ floating around quite a few times. People from many different backgrounds use it in different contexts. The thing is that this term has been applied to different things in different domains. When we talk about operating systems, we talk about which kernel is being used. Kernel is also used extensively in parallel computing and in the GPU domain, where it is the function which is called repetitively on a computing grid. It has a few other meanings in different hardware related programming fields. But in this post, I will discuss kernels as applied to machine learning. Kernels are used in machine learning to transform the data so that the classification becomes easier. One common thing in all these different definitions of the term ‘kernel’ is that it is being used as a bridge between two things. In operating systems, it is the bridge between hardware and software. In GPU domain, it is the bridge between the geometric grid and the programmer. In machine learning, it is the bridge between linearity and non-linearity. I will discuss the underlying mathematical structure in this post. So readers beware, this is a technical deep-dive.

Why do we need Kernel Functions?

If you are aware of Support Vector Machines (SVMs), you would know exactly why we would need kernel functions. If not, you can read this post to get a gist of it. As discussed in my earlier blog post about SVMs, it’s hard to classify data in the lower dimensions. The boundary line between two sets of data points becomes increasingly complex as we increase the number of classes and data points. Hence we need a way to transform this data into higher dimensions where we can draw simple hyperplanes to separate multiple classes.

What are Kernel Functions?

Kernel Functions are used in many applications to provide a simple bridge between linearity and non-linearity for algorithms. In pattern recognition, we want our algorithms to analyze and classify data efficiently. If the boundary between two sets of points is too crooked, the algorithm will take a lot of time to converge. Even when it converges, it might not be the most optimal boundary.

Kernel Functions are used in many applications to provide a simple bridge between linearity and non-linearity for algorithms. In pattern recognition, we want our algorithms to analyze and classify data efficiently. If the boundary between two sets of points is too crooked, the algorithm will take a lot of time to converge. Even when it converges, it might not be the most optimal boundary.

The main characteristic of Kernel Functions in machine learning is their distinct approach to this problem. Instead of taking the hard route of classifying the data in lower dimension by putting a really curvy line, Kernel Functions map the data into higher dimensional spaces in the hope that the data is more easily separated there. There are also no constraints on the form of this mapping, which could even lead to infinite-dimensional spaces. Now how easy is it to come up with this function? The great thing about this mapping function is that it hardly needs to be computed because of a tool called the Kernel Trick.

What is the Kernel Trick?

We have an algorithm which needs data. If the data is nicely structured, the algorithm will put a nice boundary between multiple classes. This algorithm uses do some mathematical processing on the data to come up with this boundary. Now the kernel trick is a mathematical tool which can be applied to any algorithm which solely depends on the dot product between two vectors. Now where did dot product come from? When we want to transform data into higher dimensions, we cannot just randomly project it. We don’t want to compute the mapping explicitly either. Hence we use only those algorithms which use dot products of vectors in the higher dimension to come up with the boundary. This dot product corresponds to the mathematical processing step. To compute the dot products of vectors in the higher dimension, we don’t actually have to project the data into higher dimensions. We can use a kernel function to compute the dot product directly using the lower dimension vectors.

We have an algorithm which needs data. If the data is nicely structured, the algorithm will put a nice boundary between multiple classes. This algorithm uses do some mathematical processing on the data to come up with this boundary. Now the kernel trick is a mathematical tool which can be applied to any algorithm which solely depends on the dot product between two vectors. Now where did dot product come from? When we want to transform data into higher dimensions, we cannot just randomly project it. We don’t want to compute the mapping explicitly either. Hence we use only those algorithms which use dot products of vectors in the higher dimension to come up with the boundary. This dot product corresponds to the mathematical processing step. To compute the dot products of vectors in the higher dimension, we don’t actually have to project the data into higher dimensions. We can use a kernel function to compute the dot product directly using the lower dimension vectors.

When properly applied, those candidate linear algorithms are transformed into a non-linear algorithms. Putting a curved line in the lower dimension will become equivalent to putting a linear boundary in the higher dimension. Those non-linear algorithms are equivalent to their linear originals operating in the range space of a feature space. In the context of feature space, the domain space is the lower dimension and the range space is the higher dimension. This is highly desirable because this higher-dimensional feature space could even be infinite-dimensional and thus infeasible to compute. There are also no constraints on the nature of the input vectors.

How to choose a kernel?

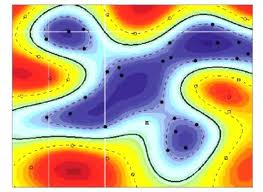

Choosing the most appropriate kernel highly depends on the problem at hand. Most of the times, fine tuning its parameters becomes a tedious and cumbersome task. The choice of a Kernel Function depends on the problem at hand because it depends on what we are trying to model. For example, a polynomial kernel allows us to model feature conjunctions up to the order of the polynomial. Feature conjunction refers to the process of fusing two or more features together. Radial basis functions allows to put circular boundaries (or hyperspheres in higher dimensions). This is useful where the data is distributed in a loop-like shape. This is in contrast with the Linear kernel, which allows only to put linear boundaries (or hyperplanes in higher dimensions). The motivation behind the choice of a particular kernel can be very intuitive and straightforward depending on what kind of information we are expecting to extract about the data.

Some popular kernels

Linear Kernel: This is one of the simplest kernel functions. It is just the inner product <x,y> plus a constant c.

Polynomial Kernel: These kernels are useful for problems where all the training data is normalized. We can use polynomial of any order depending on the case at hand.

Gaussian Kernel: This comes in the category of radial basis functions. If you know what a Gaussian looks like, you will be aware of the parameter ‘sigma’ which determines the variance of the distribution. Fine-tuning this parameter plays a major role in the performance of this kernel. If it is overestimated, the exponential will behave almost linearly and the higher-dimensional projection will start to lose its non-linear power. On the other hand, if it is underestimated, the decision boundary will be highly sensitive to noise in training data. There are a few more radial basis functions like Exponential, Laplacian, ANOVA etc. The formulations are more or less similar to this.

There are many more kernel functions like Spherical, Wavelet, Bayesian, Cauchy, Bessel etc. I cannot discuss all of them here. Depending on the problem at hand, you can look up the appropriate kernel functions. Different kernels have different advantages and disadvantages. Once you are aware of them, you can decide if a particular kernel is well suited to the problem at hand.

————————————————————————————————-

Reblogged this on #iblogstats.