This blog post is a continuation of my previous post on ridgelet analysis. Motivated by the problem of finding efficient representations of objects, people introduced yet another representation system called the curvelet transform. It is very efficient at representing objects that have discontinuities along curves, and at compressing image data as well. Curvelets are a non-adaptive technique for multiscale object representation. Why do we need them? Are they more efficient than ridgelets?

What are curvelets?

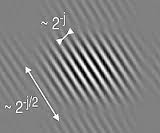

Being an extension of the wavelet concept, curvelets are becoming popular in image processing and scientific computing. Like ridgelets, curvelets occur at all scales, locations, and orientations. However, whereas ridgelets have unit length, curvelets occur at all dyadic lengths. They also exhibit anisotropy, which increases as the scale decreases. In short, curvelets obey a parabolic scaling relation: the width of a curvelet element is about the square of its length. Conceptually, we may think of the curvelet transform as a multiscale pyramid with many directions and positions at each length scale, and needle-shaped elements at fine scales. This goes one step beyond what ridgelets offer: now we are stretching our analyzing elements by a different amount at each level to see what useful structure they reveal.
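The parabolic scaling relation can be made concrete with a little arithmetic. The sketch below assumes an idealized curvelet at scale index `j` whose support is about 2^(-j/2) long and 2^(-j) wide, ignoring all constants; the function name `curvelet_support` is hypothetical and does not come from any curvelet library. It checks that width equals length squared and shows the aspect ratio (length/width = 2^(j/2)) growing as the scale gets finer.

```python
def curvelet_support(j):
    """Idealized (length, width) of a curvelet at scale index j.

    Assumption: length ~ 2**(-j/2) and width ~ 2**(-j), i.e. parabolic scaling.
    Constants are ignored; only the scaling behaviour matters here.
    """
    length = 2.0 ** (-j / 2)
    width = 2.0 ** (-j)
    return length, width


for j in range(0, 9, 2):
    length, width = curvelet_support(j)
    # width == length**2, so the element gets relatively skinnier as j grows
    print(f"j={j}: length={length:.4f}, width={width:.5f}, aspect ratio={length / width:.0f}")
```

For j = 0, 2, 4, 6, 8 the aspect ratio is 1, 2, 4, 8, 16: it doubles every two scales, which is the "anisotropy increasing with decreasing scale" described above.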

Curvelets vs Wavelets

Wavelets generalize the Fourier transform by using a basis that represents both location and spatial frequency. The Fourier transform, if you recall, just tells us what the signal contains without telling us where those contents are. It’s like telling us that both New York and Los Angeles are in the USA, but not telling us where exactly they are within the country! For 2D or 3D signals, directional wavelet transforms go further by using basis functions that are also localized in orientation. This means that a directional wavelet transform tells us not only about the content and its location, but also about the orientation at that point. It’s like telling us about the shape of those cities.
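The New York/Los Angeles analogy can be checked numerically in 1D. In the sketch below, the same short oscillating burst is placed at two different positions. Because the shift is circular, the magnitudes of the Fourier coefficients are identical, so they cannot tell us where the burst is, while the detail coefficients of a one-level Haar wavelet transform move along with it. The helper `haar_level1` is hand-written for illustration (it is not a library function), and the example uses only NumPy.

```python
import numpy as np


def haar_level1(x):
    """One level of the orthonormal Haar wavelet transform (even-length input)."""
    x = np.asarray(x, dtype=float)
    approx = (x[0::2] + x[1::2]) / np.sqrt(2)  # local averages
    detail = (x[0::2] - x[1::2]) / np.sqrt(2)  # local differences
    return approx, detail


n = 64
a = np.zeros(n)
a[8:16] = [1, -1, 1, -1, 1, -1, 1, -1]  # short oscillating burst at samples 8..15
b = np.roll(a, 32)                      # same burst moved to samples 40..47

# Fourier magnitudes are identical, so they say nothing about position
fa, fb = np.abs(np.fft.fft(a)), np.abs(np.fft.fft(b))
print("same Fourier magnitudes:", np.allclose(fa, fb))

# Haar detail coefficients: the first largest one tracks where the burst starts
_, da = haar_level1(a)
_, db = haar_level1(b)
print("burst near detail index", np.argmax(np.abs(da)), "vs", np.argmax(np.abs(db)))
```

This prints `True` for the Fourier comparison and detail indices 4 vs 20, i.e. the wavelet coefficients record where the burst sits (index times 2 gives the sample position).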

A curvelet transform differs from other directional wavelet transforms in that the degree of localization in orientation varies with scale. In particular, the fine-scale basis functions are long, skinny ridges with a precisely determined orientation. When we look at objects through a microscope, everything is magnified equally. Sometimes we don’t need everything to be magnified equally, because the shape of the object dictates how much magnification we need in each direction. This is where curvelets come in. Instead of trying to fit everything into a uniform circle, the curvelet transform looks at anisotropic objects and uses a container shaped specifically for each object.
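To make "the degree of localization in orientation varies with scale" concrete, the sketch below compares two idealized orientation schedules. It assumes a curvelet-style transform that starts with 16 orientations at its coarsest directional scale and doubles that number every second scale toward finer scales, and a generic directional wavelet transform with a fixed 6 orientations at every scale. Both the numbers and the function names are illustrative assumptions, not parameters of any particular library.

```python
import math


def curvelet_orientations(num_scales, coarse_angles=16):
    """Orientations per scale (coarse to fine), doubling every second scale.

    Idealized schedule assumed for illustration: coarse_angles * 2**ceil(s/2).
    """
    return [coarse_angles * 2 ** math.ceil(s / 2) for s in range(num_scales)]


def fixed_orientations(num_scales, angles=6):
    """Hypothetical directional wavelet: same number of orientations at every scale."""
    return [angles] * num_scales


for s, (c, w) in enumerate(zip(curvelet_orientations(5), fixed_orientations(5))):
    # angular spacing in degrees, treating orientations as evenly spread over 180 degrees
    print(f"scale {s}: curvelet {c:3d} orientations ({180 / c:5.2f} deg), "
          f"fixed {w} orientations ({180 / w:5.2f} deg)")
```

Under these assumptions the curvelet schedule is 16, 32, 32, 64, 64 orientations, so the angular spacing shrinks at finer scales, while the fixed schedule keeps the same orientation resolution everywhere.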

Where are they used?

Curvelets are an appropriate basis for representing images that are smooth apart from singularities along smooth curves, where the curves have bounded curvature, that is, where objects in the image have a minimum length scale. This property holds for cartoons, geometrical diagrams, and text. As one zooms in on such images, the edges they contain appear increasingly straight. Curvelets take advantage of this property by making the higher-resolution curvelets skinnier than the lower-resolution ones. However, natural images do not have this property; they have detail at every scale. Therefore, for natural images, it is preferable to use some sort of directional wavelet transform whose wavelets have the same aspect ratio at every scale.
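The claim that edges look increasingly straight as we zoom in, and its link to the width ≈ length² rule, can be checked with elementary geometry. Take a circular edge of radius R (curvature 1/R) and look at it through a window of length L: the arc departs from its chord by the sagitta R - sqrt(R² - (L/2)²), which is approximately L²/(8R) for small L. So the strip needed to contain a short edge segment has a width proportional to the square of its length, which matches the curvelet shape. The snippet below is a plain numerical check of that formula; the circle is just a convenient test curve and `sagitta` is a hypothetical helper name.

```python
import math


def sagitta(radius, chord):
    """Maximum distance between a circular arc and its chord of the given length."""
    half = chord / 2
    return radius - math.sqrt(radius**2 - half**2)


R = 1.0
for L in [0.5, 0.25, 0.125, 0.0625]:
    s = sagitta(R, L)
    # s / L -> 0: relative to the window, the edge looks straighter as we zoom in
    # s / L**2 -> 1/(8R): the deviation scales like the square of the window length
    print(f"L={L:<7} deviation={s:.6f}  s/L={s / L:.4f}  s/L^2={s / L**2:.4f}")
```

Halving the window roughly quarters the deviation, so the ratio s/L keeps shrinking while s/L² settles near 1/8.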

When the image is of the right type, curvelets provide a representation that is considerably sparser than other wavelet transforms. This can be quantified by considering the best approximation of a geometrical test image that can be built from only n terms of a given representation (n wavelets, n curvelets, or n Fourier coefficients), and analyzing how the approximation error decreases as a function of n.
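For reference, the classical result behind this comparison (due to Candès and Donoho) concerns images that are twice differentiable except for jumps along twice-differentiable curves. Writing f_n for the approximation built from the n largest coefficients of each representation, the squared approximation error behaves roughly as follows:

```latex
\begin{aligned}
\text{Fourier:}   &\quad \|f - f_n\|_2^2 = O\!\left(n^{-1/2}\right) \\
\text{Wavelets:}  &\quad \|f - f_n\|_2^2 = O\!\left(n^{-1}\right) \\
\text{Curvelets:} &\quad \|f - f_n\|_2^2 \le C\,(\log n)^3\, n^{-2}
\end{aligned}
```

Up to the logarithmic factor, the curvelet rate n^{-2} is the best possible for this class of images, whereas wavelets cannot do better than n^{-1}.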
