Principal Component Analysis (PCA) is one of the most useful tools in the field of pattern recognition. Let’s say you are making a list of people and collecting information about their physical attributes. Some of the more common attributes include height, weight, chest, waist and biceps. If you store 5 attributes per person, it is equivalent to storing a 5-dimensional feature vector. If you generalize it to ‘n’ different attributes, you are constructing an n-dimensional feature vector. Now you may want to analyze this data and cluster people into different categories based on these attributes. PCA comes into the picture when you have a set of datapoints which are multidimensional feature vectors and the dimensionality is high. If you want to analyze the patterns in the example above, it’s quite simple because it’s just a 5-dimensional feature vector. In real-life systems, the dimensionality is much higher (often in the hundreds or thousands) and it becomes very complex and time-consuming to analyze such data. What should we do now?

Why do we need PCA?

In our earlier example, if I ask you to separate people into 3 different categories, you will look at all 5 attributes and separate them. Now what if I ask you to pick only two features and separate people based on these? One of the more intuitive ways is to pick the two features which are the most discriminative. If you look at the values, you will realize that the values of chest, waist and biceps of all the people are fairly close to each other. On the other hand, the values of height and weight vary widely. So you pick these two features and discard the rest, while still being confident that your separation method will give good results. PCA achieves exactly this, but in a much better way! When you have a large number of datapoints with high dimensionality, you cannot sit and look through each and every feature and decide which one to pick. Most of the time, the features may not be directly discriminative themselves. Sometimes, a combination of features is more discriminative. PCA helps us reorganize the data in such a way that we can pick the top ‘k’ features and be confident that they will be the most discriminative, in the sense that they capture the most variation in the data.
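To make the "pick the two features that vary the most" idea concrete, here is a minimal sketch using NumPy. The measurements are made-up numbers chosen only for illustration, and the names `people`, `feature_names` and `selected` are my own. Note that this is plain variance-based feature selection, the manual shortcut described above, and not PCA itself.

```python
import numpy as np

# Hypothetical measurements for six people (made-up values, for illustration).
# Columns: height (cm), weight (kg), chest (cm), waist (cm), biceps (cm)
feature_names = ["height", "weight", "chest", "waist", "biceps"]
people = np.array([
    [150.0,  48.0, 90.0, 78.0, 30.0],
    [162.0,  60.0, 92.0, 80.0, 31.0],
    [171.0,  72.0, 91.0, 79.0, 32.0],
    [180.0,  85.0, 93.0, 81.0, 31.0],
    [188.0,  95.0, 92.0, 80.0, 33.0],
    [195.0, 110.0, 94.0, 82.0, 32.0],
])

# Sample variance of each feature (one value per column).
variances = people.var(axis=0, ddof=1)

# Indices of the two features with the largest variance.
top_two = np.argsort(variances)[::-1][:2]
selected = [feature_names[i] for i in top_two]

print(dict(zip(feature_names, variances.round(1))))
print("Selected features:", selected)
```

With these numbers, height and weight have far larger variances than the other three columns, so they are the ones that get selected. Keep in mind that raw variance depends on the units of each feature, which is one reason data is often standardized before doing this kind of comparison.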

What is PCA?

It is a way of identifying patterns in data, and expressing the data in a way that highlights their similarities and differences. When the dimensionality is high, the luxury of graphical representation is not available. Since patterns in data can be hard to find in such cases, PCA is a powerful tool for analyzing the given data. The other main advantage of PCA is that once you have found these patterns in the data, you can compress the data without much loss of information. This is achieved by reducing the number of dimensions, discarding the less discriminative ones. This technique is used in image compression, feature construction, speeding up algorithms, etc.
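The compression idea can be sketched in a few lines of NumPy. This is only an illustration under my own assumptions: the data is synthetic (5-dimensional points that mostly vary along 2 hidden directions), and the helper names `pca_fit`, `compress` and `reconstruct` are hypothetical, not part of any library.

```python
import numpy as np

def pca_fit(data, k):
    """Return the data mean and the top-k principal directions (as columns)."""
    mean = data.mean(axis=0)
    cov = np.cov(data - mean, rowvar=False)
    # eigh is meant for symmetric matrices; eigenvalues come back ascending.
    eigenvalues, eigenvectors = np.linalg.eigh(cov)
    order = np.argsort(eigenvalues)[::-1][:k]
    return mean, eigenvectors[:, order]

def compress(data, mean, components):
    """Project centered data onto the kept directions (n_samples x k)."""
    return (data - mean) @ components

def reconstruct(codes, mean, components):
    """Map the compressed codes back to the original space (approximately)."""
    return codes @ components.T + mean

# Synthetic data: 100 points in 5-D that mostly vary along 2 hidden directions.
rng = np.random.default_rng(1)
hidden = rng.normal(size=(100, 2))
mixing = rng.normal(size=(2, 5))
data = hidden @ mixing + rng.normal(scale=0.01, size=(100, 5))

mean, components = pca_fit(data, k=2)
codes = compress(data, mean, components)       # 2 numbers per point instead of 5
restored = reconstruct(codes, mean, components)
max_error = np.abs(restored - data).max()

print("compressed shape:", codes.shape)
print("largest reconstruction error:", max_error)
```

Here each point is stored with 2 numbers instead of 5, and the reconstruction is close to the original because the discarded directions carried very little variation. On real data the error depends on how much variance the dropped directions hold.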

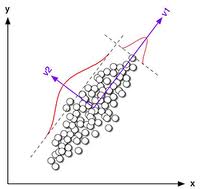

Consider the image shown here. You have to analyze the points on the plane and extract patterns. If you are asked to pick a dimension so that you can easily separate them, which one would you pick? You will pick the horizontal direction because the datapoints are spread more widely in this direction. I have discussed more about data distribution and standard deviation here. What happens when the points are spread along neither the horizontal nor the vertical direction?

Consider the image shown here. If the datapoints are spread like this, neither the horizontal nor the vertical direction will give us the best separation. Instead, the slanted line is the direction along which the points are most spread out. PCA helps us find this line. It also finds the second most separable direction. One condition is that all these directions must be orthogonal to each other. In general, for n-dimensional feature vectors, it finds ‘n’ directions. These ‘n’ directions are the eigenvectors of the data’s covariance matrix. When you use PCA on some data, you get eigenvectors along with eigenvalues. The magnitude of an eigenvalue tells us how significant the corresponding eigenvector is: it equals the variance of the data along that direction. If you arrange the eigenvalues in decreasing order, you will get the eigenvectors arranged from the most significant to the least significant.
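Here is a small sketch of how those directions can be computed with NumPy. The points are synthetic and deliberately stretched along a slanted line, and the variable names are my own choices. It shows one common way of doing it (eigendecomposition of the covariance matrix); libraries may use other routes such as the SVD.

```python
import numpy as np

# Synthetic 2-D points stretched along the slanted direction (1, 1).
rng = np.random.default_rng(0)
t = rng.normal(size=200)                  # large spread along the slant
noise = rng.normal(scale=0.2, size=200)   # small spread across it
points = np.column_stack([t - noise, t + noise])

# Center the data and compute its 2x2 covariance matrix.
centered = points - points.mean(axis=0)
cov = np.cov(centered, rowvar=False)

# eigh handles symmetric matrices and returns eigenvalues in ascending order,
# so reorder to go from most significant to least significant.
eigenvalues, eigenvectors = np.linalg.eigh(cov)
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]     # column i pairs with eigenvalues[i]

print("eigenvalues (largest first):", eigenvalues)
print("first direction:", eigenvectors[:, 0])
print("second direction:", eigenvectors[:, 1])
```

The first direction comes out close to the slanted line (up to sign, since an eigenvector and its negative describe the same line), and the second direction is orthogonal to it.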

Where is it used in real life?

In a real-life situation, we extract features and analyze them. The time taken to analyze those features depends on the number of features. But if we extract fewer features, we might lose vital information. Hence PCA is used to reduce the dimensionality and extract the most significant components. PCA is used when you want to compress data as well. Discarding a few dimensions means that you have to store less data without losing too much information. It is used in image compression, face recognition, object recognition, augmented reality and many other fields where pattern recognition is used.
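One common rule of thumb for deciding how many components to keep is to retain enough of them to cover a chosen fraction of the total variance. The sketch below assumes you already have the eigenvalues from PCA; the function name `choose_k`, the threshold and the example eigenvalues are all hypothetical and only meant to illustrate the idea.

```python
import numpy as np

def choose_k(eigenvalues, threshold=0.95):
    """Smallest k whose top-k eigenvalues cover `threshold` of the total variance."""
    sorted_values = np.sort(eigenvalues)[::-1]
    cumulative = np.cumsum(sorted_values) / sorted_values.sum()
    # Index of the first cumulative fraction that reaches the threshold.
    k = int(np.searchsorted(cumulative, threshold)) + 1
    # Guard against floating-point round-off pushing k past the end.
    return min(k, len(sorted_values))

# Made-up eigenvalues: most of the variance sits in the first two components.
example_eigenvalues = np.array([6.0, 3.0, 0.5, 0.3, 0.2])
k = choose_k(example_eigenvalues, threshold=0.93)
print("components to keep:", k)
```

The right threshold depends on the application: compression can often tolerate discarding more than a recognition task can.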
